If you think the Fed or government agencies know what is going on with the economy, you’re mistaken. Government economists are about as useful as a screen door on a submarine. Their mistakes and failures are so spectacular you couldn’t make them up if you tried.

“What will the stock market do this year?”

It seems like a simple question. You might wish for a simple answer to it, and think that people who watch stocks for a living should know that answer. Not so. The evidence shows they are no more accurate than anyone else is.

Morgan Housel of The Motley Fool skewered Wall Street’s annual forecasting record in a story last February. He measured the Street’s strategists against what he calls the Blind Forecaster. This mythical person simply assumes the S&P 500 will rise 9% every year, in line with its long-term average.

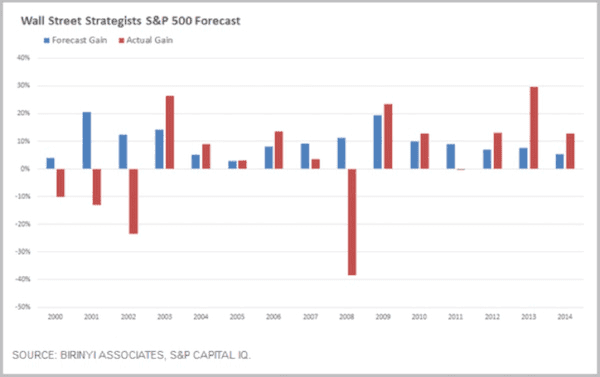

The chart below shows Wall Street’s consensus S&P 500 forecast versus the actual performance of the S&P 500 for the years 2000–2014.

The first thing I noticed is that the experts’ collective wisdom (the blue bars) forecasted 15 consecutive positive years. The forecasts differ only in the magnitude of each year’s expected gain.

As we all know (some of us painfully so), such consistent gains didn’t happen. The new century began with three consecutive losing years, then five winning years, and then the 2008 catastrophic loss.

The remarkable thing here is that forecasters seemed to pay zero attention to recent experience. Upon finishing a bad year, they forecasted a recovery. Upon finishing a good year, they forecasted more of the same. The only common element is that they always thought the market would go up next year.

Housel calculates that the strategists’ forecasts were off by an average of 14.7 percentage points per year. His Blind Forecaster, who simply assumed 9% gains every year, was off by an average of 14.1 percentage points per year. Thus the Blind Forecaster beat the experts, even if you exclude 2008 as an unforeseeable “black swan” year.
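For readers who want to see how such a comparison works, here is a minimal sketch of the arithmetic, assuming the error measure is a simple average of absolute misses. The function name and every number in it are hypothetical placeholders for illustration; they are not Housel’s data and will not reproduce his 14.7 and 14.1 figures.

```python
from statistics import mean


def mean_absolute_error(forecasts, actuals):
    """Average absolute miss, in percentage points, across all years."""
    if len(forecasts) != len(actuals):
        raise ValueError("forecasts and actuals must cover the same years")
    return mean([abs(f - a) for f, a in zip(forecasts, actuals)])


# Hypothetical annual S&P 500 returns, in percent (made-up values).
actual_returns = [-10.0, -13.0, 20.0, 5.0, -38.0, 25.0]

# Hypothetical strategist consensus: always positive, varying in size.
strategist_forecasts = [12.0, 10.0, 8.0, 11.0, 9.0, 7.0]

# The Blind Forecaster assumes a 9% gain every single year.
blind_forecasts = [9.0] * len(actual_returns)

strategist_mae = mean_absolute_error(strategist_forecasts, actual_returns)
blind_mae = mean_absolute_error(blind_forecasts, actual_returns)

print(f"Strategists missed by {strategist_mae:.1f} points per year on average")
print(f"Blind Forecaster missed by {blind_mae:.1f} points per year on average")
```

To run the comparison for real, swap in the actual year-by-year forecasts and returns. Absolute misses are used so that a forecast that is too high one year and too low the next does not cancel out and look accurate.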

“Why do investors listen to forecasters who are so consistently wrong?”

I have a guess, but let’s first look at Morgan Housel’s answer…

I think there’s a burning desire to think of finance as a science like physics or engineering. We want to think it can be measured cleanly, with precision, in ways that make sense.

If you think finance is like physics, you assume there are smart people out there who can read the data, crunch the numbers, and tell us exactly where the S&P 500 will be on Dec. 31, just as a physicist can tell us exactly how bright the moon will be on the last day of the year. But finance isn’t like physics…like classical physics, which analyzes the world in clean, predictable, measurable ways. It’s more like quantum physics, which tells us that – at the particle level – the world works in messy, disorderly ways, and you can’t measure anything precisely because the act of measuring something will affect the thing you’re trying to measure (Heisenberg’s uncertainty principle). The belief that finance is something precise and measurable is why we listen to strategists, and I don’t think that will ever go away.

Finance is much closer to something like sociology. It’s barely a science, and driven by irrational, uninformed, emotional, vengeful, gullible, and hormonal human brains…

I’ll add a twist to Morgan’s answer. I think what many investors really want is a scapegoat. The only thing worse than being wrong is being wrong with no one to blame but yourself. Forecasters keep their jobs despite their manifest cluelessness because they are willing to be the fall guy. Present company excepted, of course.

There used to be a saying among portfolio managers: “No one ever gets fired for owning IBM.” It was the bluest blue chip, one that everyone agreed would always bounce back from any weakness. If IBM made you have a bad year, the boss would understand.

Wall Street strategists serve a similar purpose. If, say, Goldman Sachs forecasts a good year, and it turns out not so good, you will be well-armed for the inevitable discussion with your spouse, investment committee, or board of directors: “I was just following the experts.”

Compare that to the alternative. How does that discussion turn out if you build your own forecasting model and it delivers dismal results? The story probably ends with you sleeping in the doghouse and/or polishing your resumé.

In the short run, hiring a scapegoat, er, forecaster, seems the path of least resistance. That’s why so many people choose it. In the long run, however, that path leads you nowhere that you want to go. You will be in fine company as you underperform, but underperform you will.

People also look to forecasts that reward their confirmation bias, reinforcing and validating their understandings of markets and investment strategy. Sadly, I must confess that I much prefer to hear a forecast or read analysis that confirms my own biases. Which is one reason I make sure to read the analyses of those who don’t agree with me.

I typically ignore – for good reason, as we will see below – forecasts based on mathematical models. I much prefer the assessments of those who analyze the future in terms of trends and general economic forces, giving us their own sense of direction about the interplay of the complex drivers of the economy – but that’s just me.

“Does the Federal Reserve have a good handle on future growth prospects?”

All right, so if forecasting the stock market is harder than it looks, how about forecasting the economy? Surely the Federal Reserve has a good handle on future growth prospects. [Well,] if that’s what you think, prepare to be disappointed.

We can’t say the Fed doesn’t try. In 2007 the Federal Open Market Committee (FOMC) started releasing GDP growth projections four times a year. They do this in the same report where we see the much-discussed interest-rate “dot plots.” It is called the “Summary of Economic Projections,” or SEP.

A 2015 study by Kevin J. Lansing and Benjamin Pyle of the San Francisco Federal Reserve Bank found the FOMC was persistently too optimistic about future US economic growth. They concluded:

Over the past seven years, many growth forecasts, including the SEP’s central tendency midpoint, have been too optimistic. In particular, the SEP midpoint forecast:

(1) did not anticipate the Great Recession that started in December 2007,

(2) under-estimated the severity of the downturn once it began, and

(3) consistently over-predicted the speed of the recovery that started in June 2009

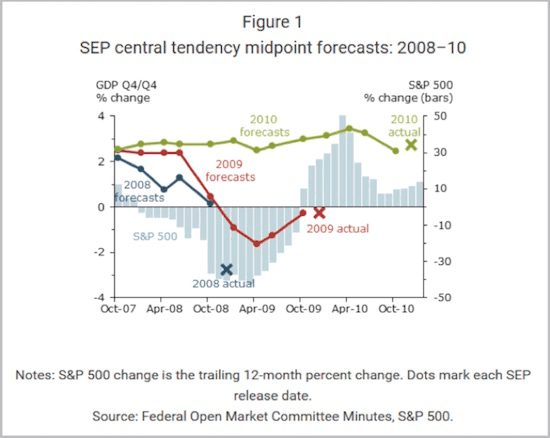

So, it isn’t just Wall Street that wears rose-colored glasses – they are fashionable at the Fed, too. Lansing and Pyle provide helpful charts to illustrate the FOMC’s overconfidence. This first one covers the years 2008–2010.

The colored lines show you how the forecast for each year evolved from the time the FOMC members initially made it. Note how they stubbornly held to their 2008 positive growth forecast even as the financial crisis unfolded, then didn’t revise their 2009 forecasts down until 2009 was underway – and then revised them too low. However, they did make a pretty good initial guess for 2010, and they stuck with it.

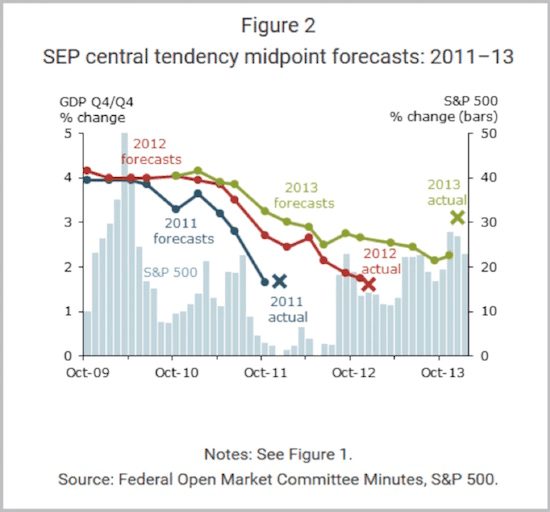

The next chart shows FOMC forecasts for 2011–2013.

We see a different picture in this chart. As of October 2009, FOMC members expected 2011 and 2012 would both bring 4% or better GDP growth. Neither year ended anywhere near those targets. Their initial 2013 forecast was near 4% as well. They reduced it as the expected recovery failed to materialize, but as in 2009, they actually guessed too low.

One problem here is that GDP itself is a political construction. Forecasting the future is hard enough when you actually understand what you are forecasting. What happens when the yardstick itself keeps changing shape? You get meaningless forecasts – but this doesn’t stop the Fed from trying.

“Does the CBO provide more accurate answers?”

If the Fed can’t accurately forecast the economy, can anyone? Surely someone in the federal government has better answers.

The Congressional Budget Office issues forecasts much as the Federal Reserve does and, like the Fed, the CBO grades itself. You can see for yourself in “CBO’s Economic Forecasting Record: 2015 Update.”

Read that document, and you will find the CBO readily admitting that its forecasts bear little resemblance to reality. Their main defense, or maybe I should say excuse, is that the executive branch and private forecasters are even worse.

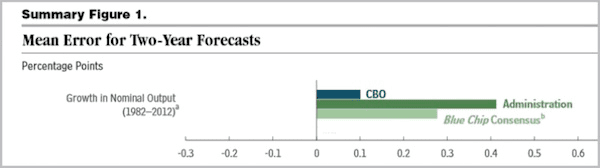

I’m not kidding. The CBO report includes the following chart. I removed other categories they measure so we can look specifically at their GDP estimates. I should also point out that they cooked the books a little by averaging two-year forecasts to make themselves look better – but even so…

The bars compare the degree of error in forecasts by the CBO, the Office of Management and Budget (OMB), and the private Blue Chip economic forecast consensus. A reading of zero would mean the average forecasts matched reality. A negative number would mean they were too pessimistic. A positive number – which is what we see for all three entities – means they were all overly optimistic on GDP growth.

What we see is that the OMB – whose director is a political appointee – was more optimistic than the Blue Chip consensus, which in turn was more optimistic than the CBO, which was more optimistic than reality.
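To make the sign convention concrete, here is a small sketch of how an average forecast error of this kind can be computed, assuming it is simply the mean of forecast minus actual. All names and numbers are hypothetical placeholders, not figures from the CBO report.

```python
def mean_error(forecasts, actuals):
    """Average of (forecast - actual); positive means too optimistic."""
    if len(forecasts) != len(actuals):
        raise ValueError("forecasts and actuals must cover the same periods")
    return sum(f - a for f, a in zip(forecasts, actuals)) / len(forecasts)


# Hypothetical real GDP growth outcomes, in percent (made-up values).
actual_growth = [2.0, 1.5, -0.5, 2.5]

# Hypothetical forecasts for the same periods from three forecasters.
forecasts_by_source = {
    "CBO": [2.5, 2.0, 1.0, 2.5],
    "Blue Chip": [2.75, 2.0, 1.25, 2.75],
    "OMB": [3.0, 2.5, 1.5, 3.0],
}

bias_by_source = {
    source: mean_error(forecasts, actual_growth)
    for source, forecasts in forecasts_by_source.items()
}

for source, bias in bias_by_source.items():
    verdict = "too optimistic" if bias > 0 else "too pessimistic or on target"
    print(f"{source}: average error {bias:+.2f} points ({verdict})")
```

Unlike an average of absolute misses, this measure lets overshoots and undershoots cancel, so it captures a forecaster’s persistent lean rather than overall accuracy.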

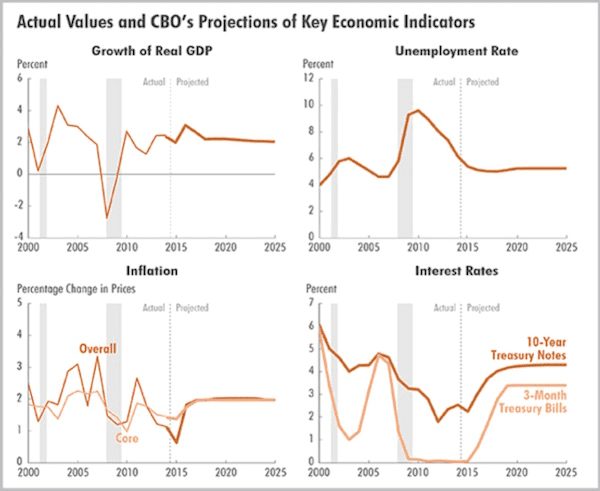

It is also worth noting how the CBO views the future. Their latest economic outlook update, published last August, features this chart.

The forecasts for GDP growth, unemployment, inflation, and interest rates all flatline after 2016. As of now, the CBO’s official position is that the U.S. economy will remain stable with no recession until at least 2025. If you find this particular prognostication hard to believe, you aren’t the only one. Nevertheless, this is what the government agency with the best forecasting record says we should expect.

I’ll go out on a limb here and say that the CBO is wrong. I am 100% certain we will have a recession before 2025. We can debate when it will start, what will cause it, and how long it will last, but not whether it will happen.

“Are economists useless at the job of forecasting?”

I wrote these next few paragraphs three years ago, but what I said then is still true today.

“In November of 2008, as stock markets crashed around the world, the Queen of England visited the London School of Economics to open the New Academic Building. While she was there, she listened in on academic lectures. The Queen, who studiously avoids controversy and almost never lets people know what she’s actually thinking, finally asked a simple question about the financial crisis: “How come nobody could foresee it?” No one could answer her.

If you’ve suspected all along that economists are useless at the job of forecasting, you would be right. Dozens of studies show that economists are completely incapable of forecasting recessions – but forget forecasting. What’s worse is that they fail miserably even at understanding where the economy is today.

In one of the broadest studies of whether economists can predict recessions and financial crises, Prakash Loungani of the International Monetary Fund wrote very starkly, “The record of failure to predict recessions is virtually unblemished.” He found this to be true not only for official organizations like the IMF, the World Bank, and government agencies but for private forecasters as well. They’re all terrible. Loungani concluded that the “inability to predict recessions is a ubiquitous feature of growth forecasts.” Most economists were not even able to recognize recessions once they had already started. In plain English, economists don’t have a clue about the future.

If you think the Fed or government agencies know what is going on with the economy, you’re mistaken. Government economists are about as useful as a screen door on a submarine. Their mistakes and failures are so spectacular you couldn’t make them up if you tried. Yet now, in a post-crisis world, we trust the same people to know where the economy is, where it is going, and how to manage monetary policy.”

Central banks tell us that they know when to raise or lower rates, when to resort to quantitative easing, when to end the current policies of financial repression, and when to shrink the bloated monetary base. However, given their record at forecasting, how will they know? The Federal Reserve not only failed to predict the recessions of 1990, 2001, and 2007; it didn’t even recognize them after they had already begun. Financial crises frequently happen because central banks cut interest rates too late or hike rates too soon.

The central banks tell us their policies are data-dependent, but then they use that data to create models that are patently wrong time and time again. Trusting central bankers now, whether in the U.S., Europe, or elsewhere, is a dicey wager, given their track record. Unfortunately, the problem is not that economists are simply bad at what they do; it’s that they’re really, really bad. They’re so bad that their performance can’t even be a matter of chance.

The reason is that they base their models on flawed economic theories that can only represent at most a pale shadow of the true economy. They assume they can use what are called dynamic equilibrium models to describe and forecast the economy. In order to create such models they have to make assumptions – and when they do, they assume away the real world.

It is not so much that the models I am criticizing are useless – they can offer economic insights in limited ways – but they cannot be (successfully) used to predict the economy or stock markets with anything close to certainty. They are simply not complex enough – and they cannot be made complex enough – to accurately describe the nonlinear natural system that is the economy. Such models can at best give you insights into certain conditions that are limited by the assumptions you have to make in order to create the models. If you’re using your models properly, you understand their deep limitations. I freely admit to using models to gather as many insights as I can (especially about relative valuations), but I certainly don’t rely on them to actually predict the future. You should never use a model without understanding in a deep and all-encompassing way that past performance is not indicative of future results.

I’m concerned that, in the coming years, looking at historical data for guidance about the future will be more misleading than simply guessing would be. The times aren’t just changing; the very underlying economic conditions that produced past performance will no longer pertain. We are truly on economicus terra incognita. (Okay I made that one up, but you get the idea.)…

Now that we’ve established that forecasting is worthless, let me make a forecast. When we next have a recession in the U.S., the Federal Reserve will give us QE 4. They are going to base their monetary policy on the data they have at the time, even though all their own research says that the last round of QE really didn’t do anything. They will once again push us into a world of financial repression and malinvestment because they will feel the need to “do something,” and about the only thing they will be able to come up with is more quantitative easing, which will force the world into yet another mutually destructive round of competitive currency devaluations…

Why Forecasting Is a Crap Shoot

By this point it should be clear that even the brightest economic and financial minds struggle to make accurate forecasts…but I don’t think we should ignore them all, because the real problem is timing. I think it is possible to observe events and trends and then to make informed projections about the future – a few people can even do it with reasonable accuracy, at least in their own areas of expertise – but the problem lies in correlating what you know is coming with the particular calendar year in which it will occur.

For instance, note what I said above. I made a recession forecast within a 10-year period. I feel very confident we will have a recession between 2016 and 2025. I can’t tell you exactly what year it will occur, although I will expose the extent of my hubris by actually trying to narrow that range down in just a few paragraphs.

I can readily forecast within a 10-year window because economic trends rarely change overnight. The events that drive national and global economic cycles take time to unfold. Even if we completely ignore present circumstances, we know it would be unprecedented for the U.S. economy to go 10 years without at least a mild recession.

[The above being said,] I’ll readily admit that some people (mostly professional traders) are pretty good at forecasting short-term market movements…[but] they’re also the first to tell you that they don’t bet the house on their forecasts. They know how easy it is to be wrong – and how costly.

munKNEE.com Your Key to Making Money
